Improving Gestural Communication for Distributed Tables by Visualizing Gesture Height

Description

Gestural communication – particularly pointing as a deictic reference – is ubiquitous when people work together on tables in the real world. Pointing reduces the complexity of verbal communication by allowing people to easily indicate objects, areas, or paths through simple gestures. When collaboration happens on distributed tables, however, it is much more difficult to remotely convey natural pointing gestures. Although digital embodiments can be employed (e.g., telepointers or video images), current techniques are inadequate for conveying the subtleties of deictic gesture.

In particular, typical embodiments do not show the height of a gesture above the table. People use height for several things when they construct a pointing gesture: to make clear the target of the gesture (e.g., putting a finger on the object of interest); to indicate different types of gesture (e.g., indications of paths versus general areas); to show different qualities in the gesture (e.g., a high gesture might be used to indicate less specificity); or to indicate that the gesture points to a location that is out of reach or off the table. Without a representation of gesture height, pointing-based gestural communication on distributed tables is prone to errors, misunderstandings, and interpretation difficulty.

Researchers in tabletop groupware have recognized some of these problems, and have suggested a few embodiment designs that show at least some aspects of height. However, these designs are primarily concerned with showing surface touches, rather than the full range of height above the table, and their effectiveness has not been evaluated in detail. In this paper, we investigate three questions that need to be answered in order to better understand the design of height visualizations:

- Accuracy: does height information improve people’s ability to determine the type or target of a gesture?

- Expressiveness: can height visualizations reliably convey qualities such as specificity, confidence, and emphasis?

- Usability: can people make use of height visualizations in realistic work, and do they prefer these representations?

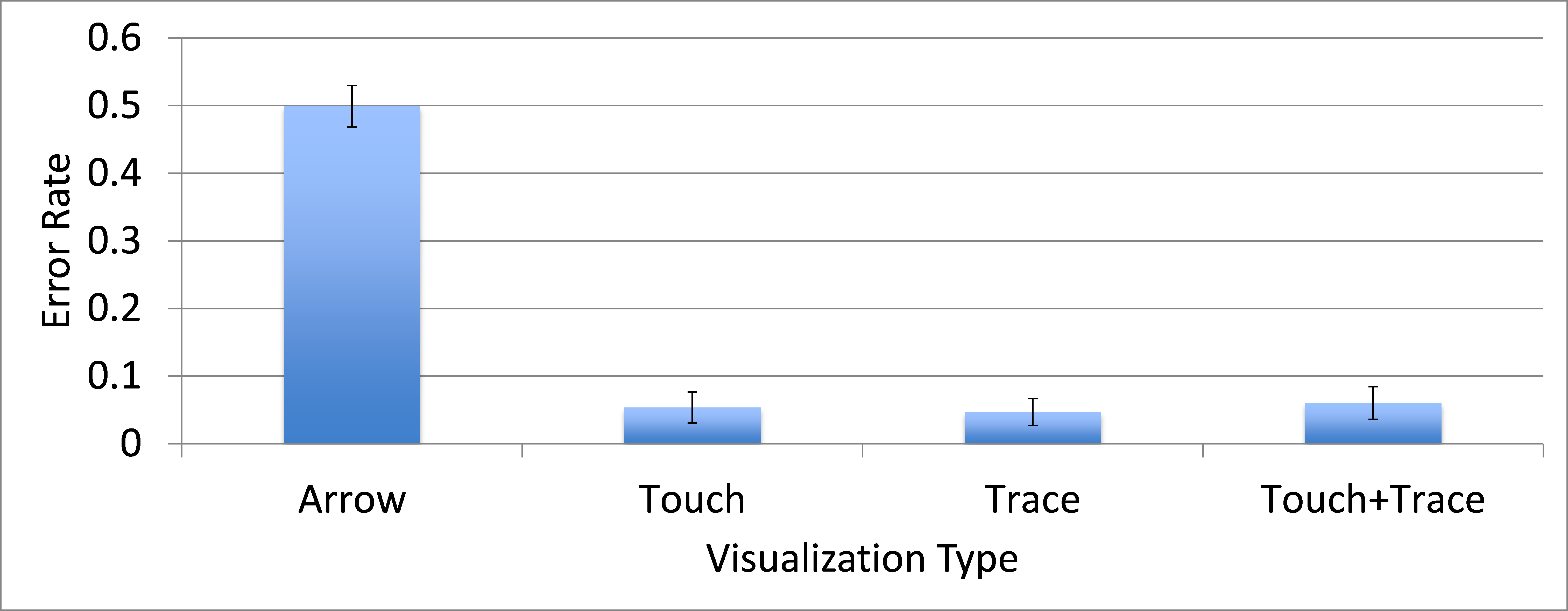

We answered these design questions with three studies. The first study showed that representing touch and hover significantly improves people’s ability to determine both the target and the type of a gesture. The second study showed that people use height visualizations to interpret a gesture’s specificity, confidence, and emphasis, and that these interpretations are consistent with the ways that people see real-world gestures. The third study looked at how people use height representations in realistic collaboration. It showed that people quickly make use of the additional height information in their deictic gestures, that the height-augmented embodiments caused no new usability problems, and that users strongly preferred the augmented versions.

This work makes two main contributions. First, we demonstrate several visualizations for showing key elements of gesture height, designs that refine and improve on previous solutions. Second, we provide a wide variety of evidence for the value of representing height in remote tabletop embodiments: we are the first to show that height visualizations can improve interpretation accuracy, can improve the expressiveness of remote gestures, and can be quickly learned and used in realistic collaboration. The design information and empirical evidence that this work provides can help improve the subtlety and precision of gestural communication on remote tables.

Images and Videos

Contact indicator and trace

Height indicator

Accuracy results for different visualization types

Publications

- Genest, A., and Gutwin, C., Evaluating the Effectiveness of Height Visualizations for Improving Gestural Communication at Distributed Tables, Proceedings of ACM CSCW 2012, 519-528.

Partners

CFI – NSERC – SurfNet
