Surface-Based Collaborative Text Analysis through Descriptive Rendering

Description

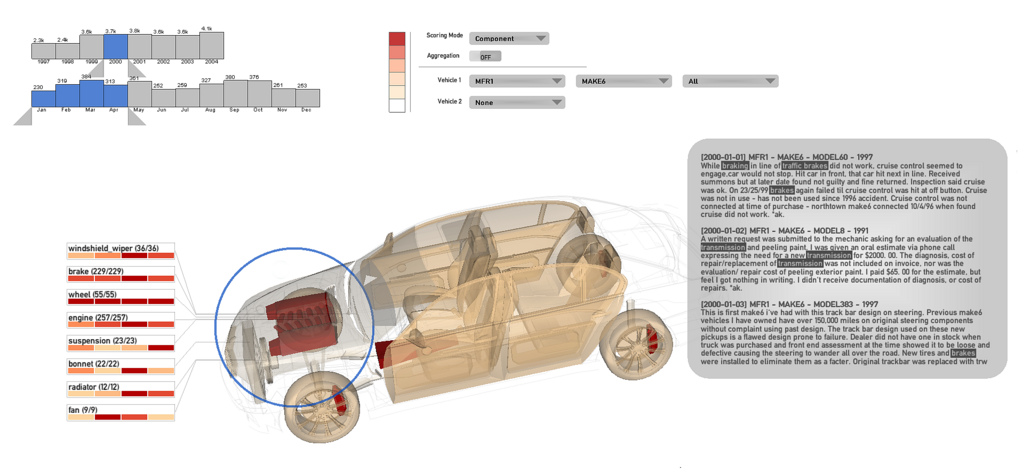

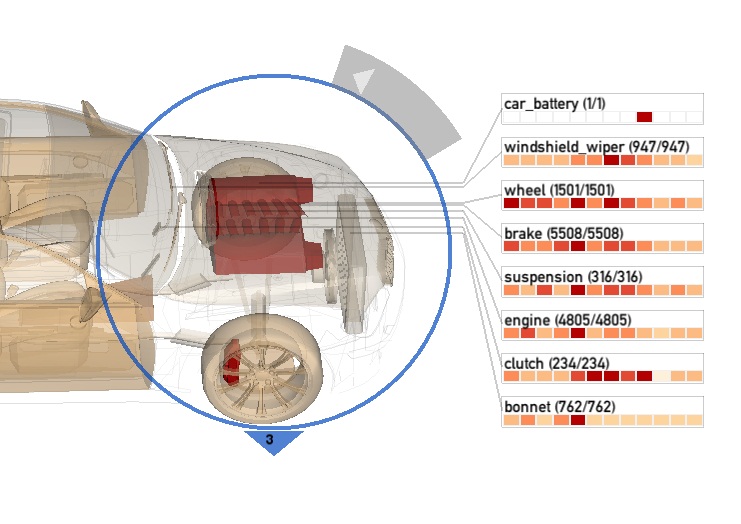

Many text documents contain real-world dimensions such as words describing physical objects and spatial relations. However, text visualizations are often abstract in nature and present visuals that are out of context with real-life expectations. We explore a visualization approach which reconstructs the subject matter from physical entities within the text document to match their real-world counterparts. Furthermore, additional attributes (such as frequency) relating to each physical entity are used as rendering parameters to create an information-rich visual, where important items are emphasized with graphical effects to attract the attention of viewers.

Our visualization prototype is designed for a large multitouch display. We envision a walk-up-and-use scenario such as an office meeting, or an ambient display deployed in a public space. A set of single-touch and multitouch gestures allows viewers to interactively explore the dataset using two real-world metaphors: examining a 3D object, and using a lens-like tool modeled on a magnifying glass. The interface combines touch interaction designed for the 3D model with interaction techniques designed for the 2D toolglass and filter widgets in one seamless set of gestures.

We apply the approach to visualize a database of vehicle complaints, with the goal of helping people investigate reliability and safety issues. Where conventional systems simply return a list of text-based reports, we summarize these data and encode them onto a 3D vehicle model. People can perform tasks such as analyzing trends, comparing different makes and models of vehicles, and investigating possible causes of problems.
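As a rough illustration of the summarizing step, the sketch below counts how many complaint reports mention each vehicle component and scales those counts into emphasis weights that a renderer could use. It is not the project's implementation: the keyword table, the function names, and the sample reports are all hypothetical, and simple keyword matching stands in for whatever entity extraction the prototype actually uses.

```python
from collections import Counter

# Hypothetical keyword-to-component lookup (an assumption for illustration).
# A real system would need a richer vocabulary and proper entity extraction.
COMPONENT_KEYWORDS = {
    "brakes": ["brake", "brakes", "braking"],
    "engine": ["engine", "stall", "stalled"],
    "airbag": ["airbag", "airbags"],
}


def count_component_mentions(reports):
    """Count how many reports mention each component at least once."""
    counts = Counter()
    for report in reports:
        # Crude tokenization: lowercase, drop commas and periods, split on spaces.
        words = set(report.lower().replace(",", " ").replace(".", " ").split())
        for component, keywords in COMPONENT_KEYWORDS.items():
            if words.intersection(keywords):
                counts[component] += 1
    return counts


def emphasis_weights(counts):
    """Scale counts to [0, 1] so the most frequently mentioned component gets 1.0."""
    if not counts:
        return {}
    peak = max(counts.values())
    return {component: n / peak for component, n in counts.items()}


# Made-up sample reports, not taken from any real complaint database.
reports = [
    "Brakes failed while braking downhill.",
    "Engine stalled on the highway.",
    "The brake pedal went soft.",
    "Airbag light stays on, and the engine hesitates.",
]
counts = count_component_mentions(reports)
weights = emphasis_weights(counts)
```

A renderer could then map each weight to a graphical effect on the matching part of the 3D model, for example a stronger highlight for components with weights near 1.0.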

As a side benefit of this SurfNet-funded project, we have developed a set of heuristics that improve the accuracy of selection and navigation on large multitouch displays that use vision-based sensors. We will make these heuristics available to other network researchers on the research project page (http://vialab.science.uoit.ca/).

An evaluation study showed promising results: study participants were able to interpret the visualization and use it to aid their analysis and decision-making. In particular, they enjoyed interacting with a familiar form and the interaction possibilities provided by the lens and 3D visualization.

Researchers

- Christopher Collins, University of Ontario Institute of Technology (Supervisor)

- Meng-Wei (Daniel) Chang, University of Ontario Institute of Technology (Student)

Publications

Exploring Entities in Text with Descriptive Non-photorealistic Rendering. To appear in Proceedings of the IEEE Pacific Visualization Symposium (PacificVis), Feb. 2013.