KinectArms

Description

Gestures are a ubiquitous part of human communication over tables, but when tables are distributed, gestures become difficult to capture and represent. There are several problems: extracting arm images from video, representing the height of the gesture, and making the arm embodiment visible and understandable at the remote table. Current solutions to these problems are often expensive, complex to use, and difficult to set up. We have developed a new toolkit – KinectArms – that quickly and easily captures and displays arm embodiments. KinectArms uses a depth camera to segment the video and determine gesture height, and provides several visual effects for representing arms, showing gesture height, and enhancing visibility. KinectArms lets designers add rich arm embodiments to their systems without undue cost or development effort, greatly improving the expressiveness and usability of distributed tabletop groupware.
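To make the depth-based segmentation idea concrete, here is a minimal sketch of how an overhead depth frame could be split into arm and table pixels and how a gesture height could be read from it. It is an illustration under stated assumptions, not the KinectArms implementation: the function names `segment_arm` and `gesture_height`, the threshold values, and the assumption of a flat table at a known distance from the camera are all hypothetical.

```python
from typing import List, Optional

# Depth frame: distance from an overhead camera in millimetres.
# A value of 0 is treated as "no reading" (an assumption for this sketch).
Depth = List[List[int]]
Heights = List[List[Optional[int]]]


def segment_arm(depth: Depth, table_mm: int,
                min_height_mm: int = 15,
                max_height_mm: int = 600) -> Heights:
    """Return height above the table for arm pixels, None elsewhere.

    A pixel counts as arm if it lies between min_height_mm and
    max_height_mm above the (assumed flat) table surface.
    """
    heights: Heights = []
    for row in depth:
        out_row: List[Optional[int]] = []
        for d in row:
            h = table_mm - d  # height above the table surface
            if d > 0 and min_height_mm <= h <= max_height_mm:
                out_row.append(h)
            else:
                out_row.append(None)
        heights.append(out_row)
    return heights


def gesture_height(heights: Heights) -> Optional[int]:
    """Lowest arm point above the table, a crude stand-in for fingertip height."""
    values = [h for row in heights for h in row if h is not None]
    return min(values) if values else None


# Hypothetical 3x3 frame with the table 1000 mm below the camera.
frame = [
    [1000, 1000, 0],
    [900, 960, 1000],
    [700, 1000, 995],
]
arm = segment_arm(frame, table_mm=1000)
lowest = gesture_height(arm)
```

The resulting mask could then be applied to the colour image to cut out the arm, and the per-pixel heights could drive a visual effect that shows how far above the table the gesture is.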

Images and Videos

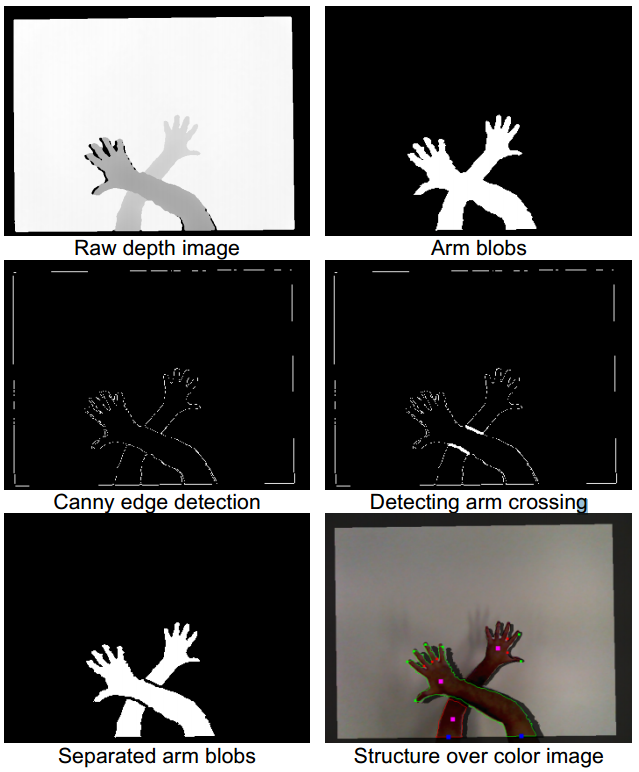

KinectArms image processing steps

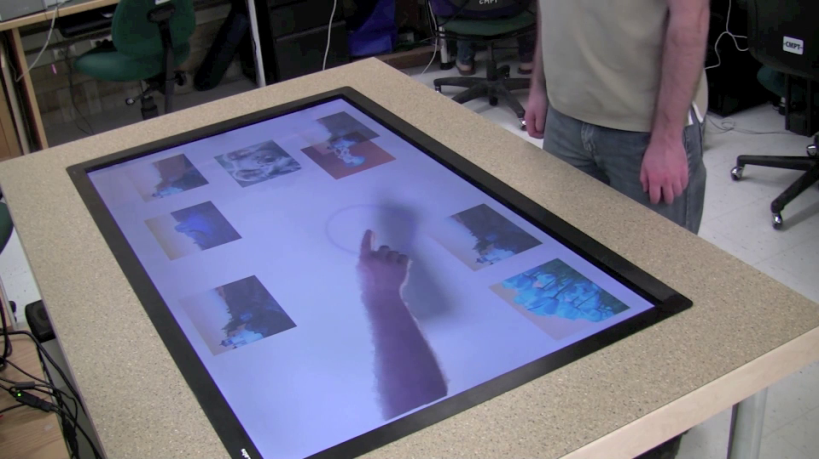

KinectArms on a distributed table

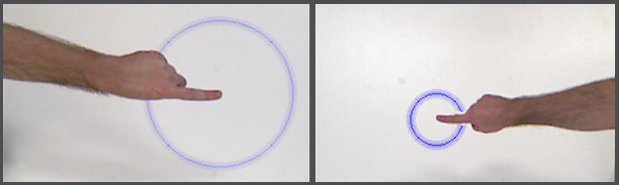

KinectArms example effects

Partners

NSERC – SurfNet
